APPENDIX

Existing Policy & Global Frameworks on Children, AI, and Safety

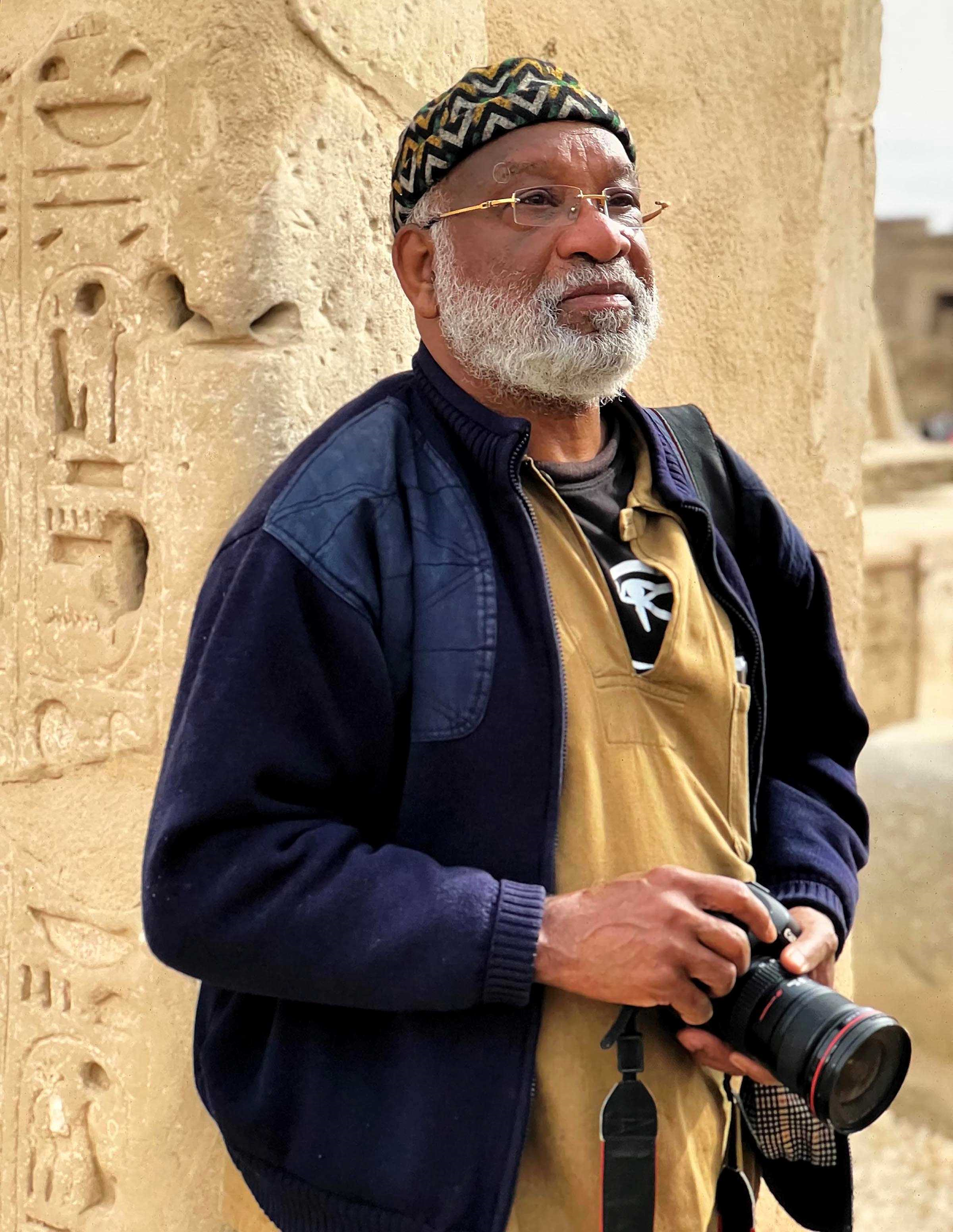

From 2006 to 2015, Sponsor A Child (Nigeria) advocated for the rights of Nigerian children to be protected in child institutions across the nation. We grounded our advocacy work in the Child Rights Act (CRA 2003), which domesticates the UN Convention on the Rights of the Child (UN CRC 1989). Our advocacy spanned 5 manuals - training, monitoring & evaluation, reporting - with Local Champions: A Caregivers Manual for Caregivers in Nigerian Institutions, comprising 20 modules. The content of the toolkit, which became known as The Good Home Scheme, cuts across the 5 pillars of child rights: survival/life; development; protection; participation; and the human dignity of the child. These manuals, and the training workshops which they are designed to support, remain our megaphone. They are our platforms for reaching out to parents, caregivers, educators and governments, calling them to fulfil their various mandates with regard to the protection of the rights of Nigerian children.

As an appendix to the reflective dialogues I have had with ChatGPT about the risks of AI for Gen Z and Gen Alpha, and given my background in child rights, it makes sense to identify relevant child rights and applicable Sustainable Development Goals (UN SDGs).

THE RISKS OF AI FOR GEN Z & GEN ALPHA

The rights and goals I have identified address risks related to media and to the transmission and reception of information. The concerns and objectives articulated in these rights and global goals address the type of risks to children posed by technologies as powerful and as disruptive as Generative AI.

The child rights are:

- Section 7: Freedom of Thought, Conscience and Religion (with guidance), and Section 8: Right to privacy and communication. We should take these alongside the principle of the "best interests of the child" and the mandate to provide guidance in proportion to the "evolving capacities" of the child. Taken also with Section 20, Sections 7 & 8 imply a child's right to information, provided it does not harm the child or others.

- Right to Protection from Harmful Publications – CRA Sections 35-38

- Right to the Guidance of Parents & Those in Loco Parentis in Decision Making – CRA Section 20

The UN SDGs address social, economic and environmental justice and the ones I identified as relevant are:

- SDG 4 – Inclusive, Equitable & Quality Education

- SDG 9 – Industry, Innovation & Infrastructure

- SDG 16 – Peace, Justice & Strong Institutions

Let us take them one by one, examining their connection to the risks outlined in my reflective dialogue with AI.

Child Rights Act 2003: Right to Protection from Harmful Publications

Sections 35-38: Protection from exposure to materials likely to harm a child’s wellbeing.

AI Relevance: Generative AI can create, recommend, or distribute harmful, age-inappropriate, or manipulative content.

Risk to Children: Gen Z & Gen Alpha face constant digital exposure; harmful AI-curated media may bypass parental controls.

Sections 35-38 of the CRA 2003 are intended to shield children from harmful publications: traditionally books, films, or magazines, but now arguably extending to AI-generated outputs.

In the AI context, ‘publications’ include algorithmically curated feeds, deepfake videos, or AI chatbots that may normalise harmful behaviours.

Gen Z and Gen Alpha’s deep integration with online platforms magnifies this risk. AI systems that lack strict safety checks can push harmful narratives under the guise of entertainment or information.

To Clarify:

The Child Rights Act affirms every child’s right to protection from harmful publications and materials (Sections 35-38, CRA 2003).

When applied to the digital environment, this principle is directly challenged by the ways in which AI can act as a “smart mirror”, amplifying risks such as:

- Manipulative algorithms that nudge children toward unhealthy behaviors or extremist content.

- Identity instability, as AI systems reinforce risky exploration of personas in ways that may blur boundaries of self and reality.

- Normalization of harmful content, through recommendation engines that repeatedly expose children to violence, abuse, or distorted ideals.

In this sense, AI introduces new forms of harmful publications and exposures that the Act’s provisions were designed to guard against.

SDG 16.2 provides a useful global framework. Although it does not explicitly mention AI, its commitment to ending the abuse and exploitation of children is highly relevant. When this goal is interpreted through the lens of today’s digital landscape, manipulative AI systems can credibly be understood as creating new contexts of exploitation and harm. Thus, safeguarding children from AI-driven risks is a direct extension of realizing SDG 16.2 and upholding the Child Rights Act’s protections.

Child Rights Act 2003: Right to the Guidance of Parents & Those in Loco Parentis in Decision-Making

Section 20: Children must receive guidance from parents or guardians in decision-making.

AI Relevance: AI can bypass parental influence by directly shaping children’s worldviews.

Protection Role: Parents, teachers, guardians can set boundaries on AI use and promote digital literacy.

What is digital literacy?

Digital literacy means having the skills and knowledge to make effective use of computers and smartphones, the internet and online platforms, and social media and online communication tools.

Digital literacy enables you to navigate and use these technologies confidently and safely to achieve your goals.

You can become digitally literate by:

- Learning basic computer skills.

- Taking online courses and tutorials.

- Practicing using digital tools.

- Seeking guidance from friends and family, particularly young members of the family, who are digital natives. This is often the most effective learning method for older generations.

Section 20 of the CRA 2003 underscores the importance of adult guidance in decision-making in the formative years. AI’s capacity to influence behaviour is powerful and subtle. It can take a range of forms from personalised ads to adaptive tutoring systems. If its capacity is left to function unchecked, it can erode parental influence. Stakeholders in loco parentis (teachers, mentors) must be equipped to identify harmful AI patterns and actively mediate children’s digital experiences, advocating for safe, age-appropriate AI systems.

Child Rights Act 2003: Right to Access and Disseminate Appropriate Information

Section 7: Freedom of Thought, Conscience and Religion (with guidance).

Section 8: Right to privacy and communication (especially correspondence/telegraph).

Taken with Section 20, Sections 7 & 8 of the CRA 2003 imply that a child has the right to information, provided it does not harm the child or others.

AI Relevance: AI expands access to vast pools of information but, by the same token, expands access to misinformation.

Risk Factor: Children can unintentionally share harmful AI-generated content.

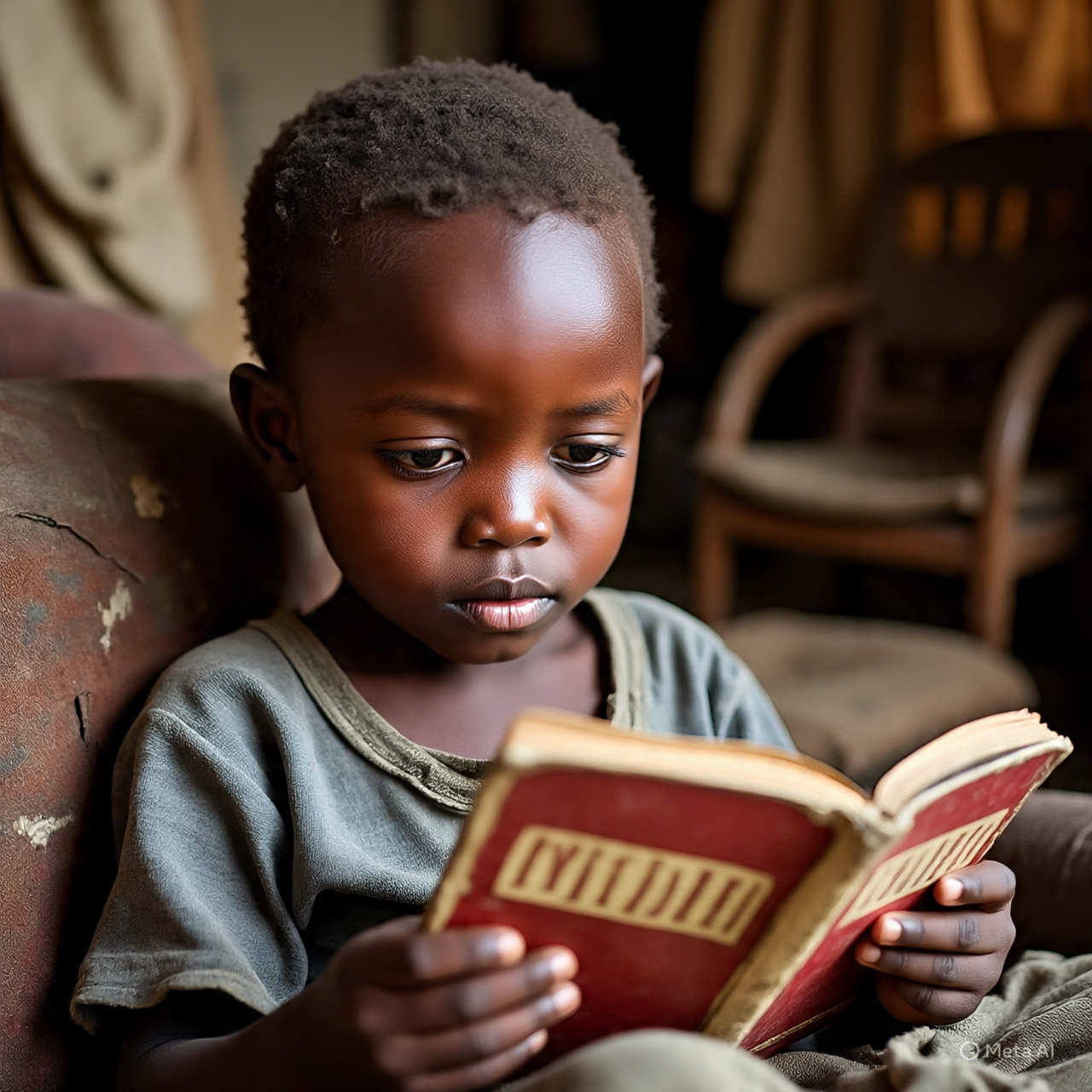

The Child Rights Act 2003 recognises that children benefit from access to knowledge, but this comes with safeguards. In an AI-driven information ecosystem, the challenge is distinguishing between credible and fabricated material. Without safeguards, children may not only consume harmful AI content but also propagate it, thereby becoming both victims and vectors of harm. This underlines the need for AI literacy and responsible content-sharing norms.

Examples of Responsible Content-Sharing Norms

- Fact-check before sharing: Children must pause to verify whether AI-generated content is accurate and safe before forwarding or posting it.

- Respect age-appropriate boundaries: Children must avoid sharing violent, sexual, or otherwise unsuitable AI-generated content, especially with younger peers.

Child-Directed Norms (simple checklist for children)

- If it feels wrong, don’t share it: Content that makes you feel scared, upset, or uncomfortable is not safe to pass on.

- Would you show it to a trusted adult? If not, it’s better not to share it.

- Keep it kind and safe: Never share content that encourages bullying, violence, hate, or sexual material.

Guidelines for Educators and Parents (what to teach and reinforce)

- Encourage emotional awareness: Teach children to trust their feelings — if content makes them anxious, upset, or uncomfortable, it’s likely unsuitable.

- Promote the “trusted adult test”: Guide children to pause and ask, “Would I feel okay showing this to a parent, teacher, or guardian?” If not, they shouldn’t share it.

- Set clear red lines: Help children recognize harmful categories of AI-generated content (e.g., violent, hateful, sexual, or bullying material) and make clear that these should never be shared.

What is AI literacy?

AI literacy means understanding the basics of Artificial Intelligence (AI) and how it impacts our lives. It involves knowing what AI is; how AI works; the various applications of AI; the limitations and biases of AI; and how to interact with AI. In short, it means knowing enough about AI to navigate an AI-driven world with confidence.

Responsible Content-Sharing & AI Literacy

Responsible content-sharing is a core component of AI literacy. Teaching children how to measure what is safe or unsafe to share helps them move beyond passive consumption of AI-generated material to active, critical engagement.

- Emotional awareness (trusting their feelings about harmful content) trains children to recognize manipulative or distressing AI outputs.

- The trusted adult test equips them with a practical decision-making tool to filter questionable content before it spreads.

- Clear red lines (around violent, hateful, or sexual material) provide children with a framework for distinguishing safe AI content from harmful outputs.

By developing these habits, children learn not only how to protect themselves from harmful AI content (avoiding victimhood) but also how to prevent themselves from becoming unintentional amplifiers of that harm (avoiding becoming vectors).

UN SDG 4 – Inclusive, Equitable & Quality Education

AI Potential: AI enhances learning through personalised education.

Risks to Children: While AI can help personalise learning, it can also undermine educational goals by prioritising engagement over truth.

What does this mean?

An AI tutor or learning app may be programmed with “engagement first” as a priority, which might lead to oversimplification, distortion, or even misrepresentation of information in order to hold the child’s attention and keep them “hooked”. Remember that in education the student’s destination is understanding and truth, not just enjoyment.

Risks to Children: Without safeguards on children’s use of AI, AI can 1) entrench bias and 2) reduce critical thinking.

Entrench bias: AI systems learn from data. If the data is biased, the AI system will likely perpetuate those biases. If the AI development teams lack diversity, they may not consider diverse perspectives, leading to biased AI systems which may reinforce stereotypes.

Reduce critical thinking: Over-reliance on AI decision-making can weaken human critical thinking and judgement.

Children’s Agency: Children need digital literacy as a core competency, enabling them to question AI outputs and think critically about their sources.

Policy Link: Align AI in education with child protection principles.

Examples:

- AI systems must prioritize children’s wellbeing, safety and development, for instance through age-appropriate content filtering.

- Safeguards must be implemented to prevent AI systems from causing physical, emotional or psychological harm to children.

- AI systems must handle children’s data responsibly and securely, for instance using robust data encryption and anonymization.

- AI decision-making processes should be transparent, for example through regular audits and testing for bias.

- Educators should hold developers accountable for any harm caused by AI systems.

- Wherever AI systems are used, there must be human oversight and review mechanisms.

UN SDG 9: Industry, Innovation, and Infrastructure

AI Potential: AI drives innovation and future workforce skills.

Risks to Children: Innovation prioritising profit may ignore safety for minors, embedding unsafe patterns.

What does this mean?

It means that in AI’s rapid deployment, some actors in the AI sector are likely to be negligent, allowing unsafe patterns to be embedded into emerging technology ecosystems. This negligence prioritizes speed and profit over child welfare, creating lasting risks for children in the form of long-term exposure to manipulative content or unsafe data practices. It also undermines trust in digital governance.

Policy Link: Child safety must be a non-negotiable in tech infrastructure design.

Key stakeholders include tech companies, which are responsible for developing and implementing safety features in their products and services, and governments, which enact and enforce legislation to protect children’s online safety, such as data protection laws and regulations. Parents and caregivers also play a crucial role in monitoring and guiding children’s online activities. International organizations such as the International Telecommunication Union (ITU) can provide guidelines and support for countries to develop child-friendly infrastructure.

Examples of child safety in tech infrastructure: safe search engines for kids, with built-in filters that block inappropriate content to ensure a safer online experience for children; age-verification processes; and parental controls.

Implementing child safety: 1) This necessitates educating children, parents and caregivers about online safety and digital citizenship, that is, the responsible and ethical use of technology, online platforms and digital tools. 2) It also involves collaborations and partnerships to share best practices and ensure a coordinated approach to child safety online.

SDG 16: Peace, Justice, and Strong Institutions

AI Potential: Promotes access to justice and civic participation.

Examples: access to information about their rights, laws and civic processes; personalized learning; interactive simulations which allow children to practice civic skills, e.g. debates and mock trials; and support for inclusive education via, for example, language translation, AI-powered image recognition and description tools, and text-to-speech/speech-to-text tools. AI-powered tools can also offer features like font size adjustment, high contrast modes and closed captions to support children with visual or hearing impairments.

AI Risks to Children: Can enable exploitation, surveillance, and rights violations (e.g. of privacy rights), and can perpetuate injustice.

Examples of exploitation, surveillance & rights violations: data collection and profiling; targeted advertising and manipulation; surveillance and monitoring.

Example of the perpetuation of injustice: AI systems can perpetuate existing biases and discriminatory practices, potentially harming marginalized or vulnerable children.

Policy Link: Strong governance needed to enforce child protections in AI deployment.

SDG 16 is about justice and protection for all, including children. AI risks eroding trust in institutions if used for surveillance or disinformation targeting young people. Governments must ensure AI governance includes child-specific safeguards, and institutions must be transparent and accountable in how AI tools are deployed in schools, communities, and public services.

Tech companies need to be transparent and accountable about AI decision-making processes, which can be opaque.

Which individuals or organizations do we hold accountable for potential rights violations?

UN SDG 11 – Safe, Resilient & Sustainable Cities and Communities

Concern: Societal Risks of Unguided Usage

Unguided usage of AI by children in education can shape children’s values and thinking patterns, and harmful biases or manipulative behaviours may be reinforced.

Today’s children are tomorrow’s leaders and unhealthy early conditioning can endanger community wellbeing.

Aligning this concern with SDG 11 encourages parents and educators to guide their wards' usage of AI, and encourages tech companies and governments to commit to fostering safe, inclusive and resilient societies through responsible educational design.

WARNING

If children grow up guided primarily by AI systems, especially those optimized for engagement over truth, there is the risk of embedding harmful patterns into the very fabric of society.

Just as unsafe urban planning leads to dangerous cities, unsafe AI, whether recreational or educational, can produce citizens who may perpetuate instability, discrimination, or civic disengagement.

To be healthy, societies need citizens who think critically, who empathize with others and act with integrity. These are the human traits AI must help cultivate, not erode.

Finally, guiding the usage of AI at home or at school is about nurturing and shaping the world’s future adults, the very humans who will inherit and manage our cities and communities.

Olatoun Gabi-Williams

Child Protection Advocate

Founder, Sponsor A Child Nigeria